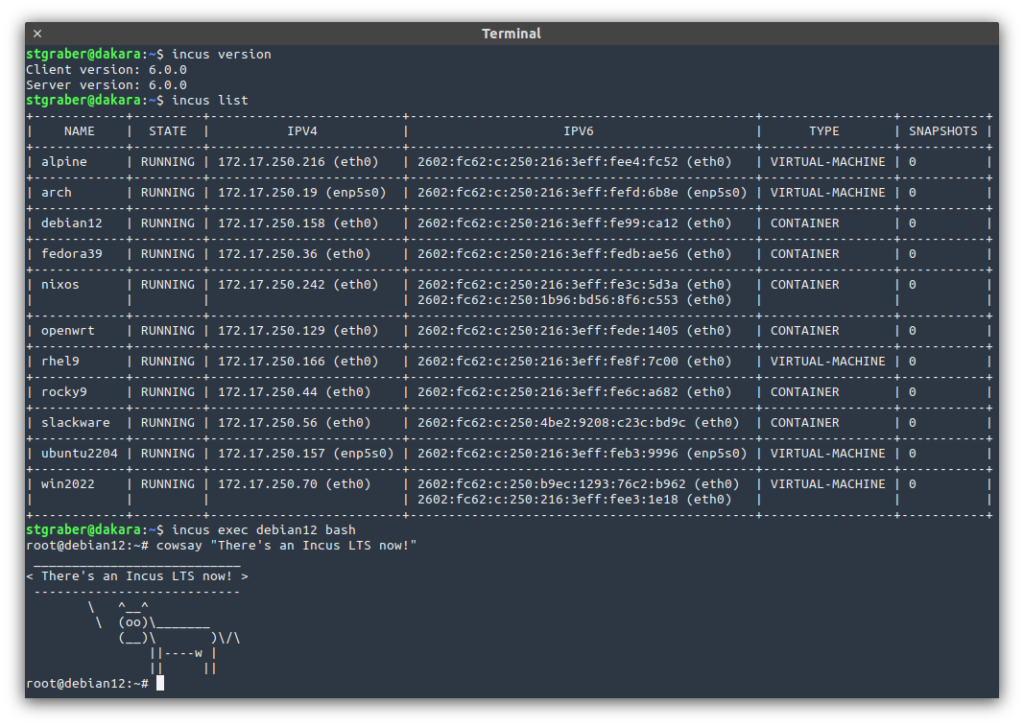

And it’s finally out, our first LTS (Long Term Support) release of Incus!

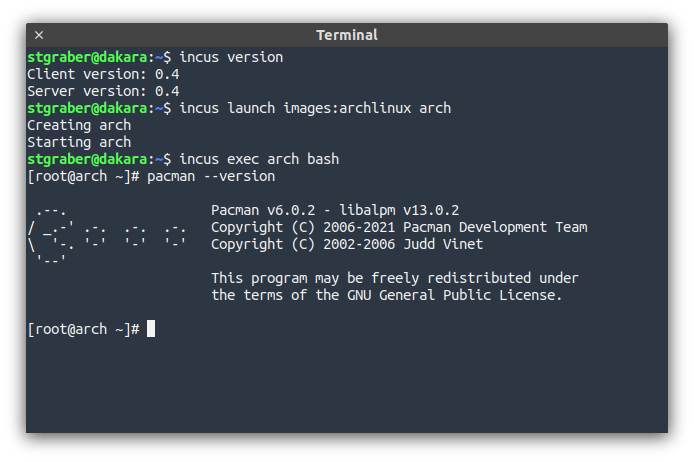

For anyone unfamiliar, Incus is a modern system container and virtual machine manager developed and maintained by the same team that first created LXD. It’s released under the Apache 2.0 license and is run as a community-led Open Source project as part of the Linux Containers organization.

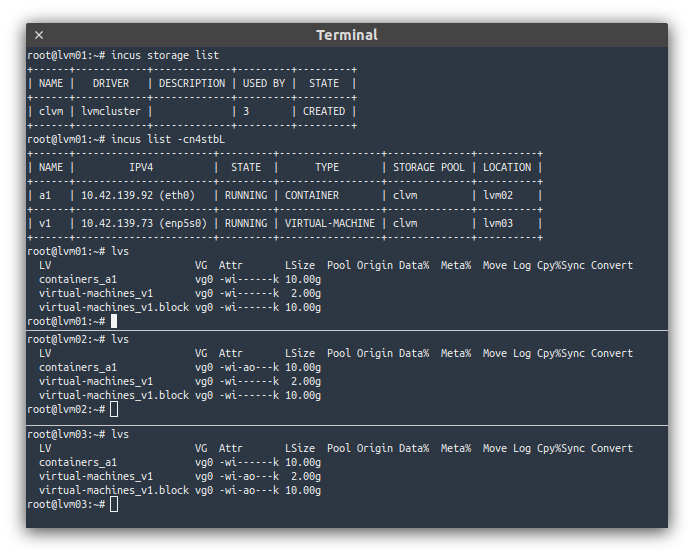

Incus provides a cloud-like environment in which instances are created from premade images. It offers a wide variety of features, including the ability to seamlessly cluster up to 50 servers together.

It supports multiple local and remote storage options as well as traditional or fully distributed networking, and offers most common cloud features, including a full REST API and integrations with common tooling like Ansible, Terraform/OpenTofu and more!

The LTS release of Incus will be supported until June 2029, with the first two years featuring bug and security fixes as well as minor usability improvements, before transitioning to security fixes only for the remaining three years.

The highlights for existing Incus users are:

- Swap limits for containers

- New shell completion mechanism

- Creation of external bridge interfaces

- Live-migration of VMs with disks attached

- System information in `incus info --resources`

- USB information in `incus info --resources`
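For anyone who wants to look at the new system and USB details from a script, here is a minimal sketch that simply shells out to the `incus info --resources` command mentioned above. The helper name `show_resources` and the 30 second timeout are my own choices for illustration, not part of Incus, and the sketch assumes the `incus` client is on your `PATH` and can reach a running daemon.

```python
import shutil
import subprocess

# The command itself comes straight from the release highlights.
RESOURCES_CMD = ["incus", "info", "--resources"]


def show_resources(timeout=30):
    """Return the text printed by `incus info --resources`.

    Returns None when the incus client is not installed, the command
    fails (for example because the daemon is not reachable), or the
    call takes longer than `timeout` seconds.
    """
    # Hypothetical helper: bail out early if the client is missing.
    if shutil.which(RESOURCES_CMD[0]) is None:
        return None
    try:
        result = subprocess.run(
            RESOURCES_CMD,
            capture_output=True,
            text=True,
            check=False,
            timeout=timeout,
        )
    except (subprocess.TimeoutExpired, OSError):
        return None
    return result.stdout if result.returncode == 0 else None


if __name__ == "__main__":
    output = show_resources()
    print(output if output is not None else "incus is not available here")
```

The function deliberately returns the raw text rather than trying to parse it, since the exact layout of the output is not described in this post.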

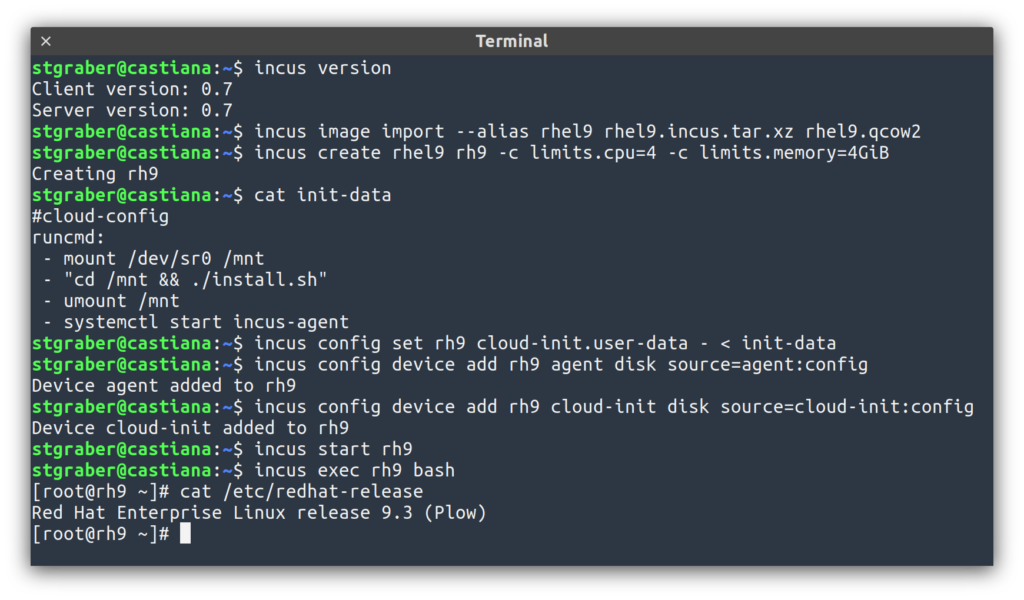

For those coming from LXD 5.0 LTS, a full list of changes is included in the announcement as well as some instructions on how to migrate over.

The full announcement and changelog can be found here.

And for those who prefer videos, here’s the release overview video:

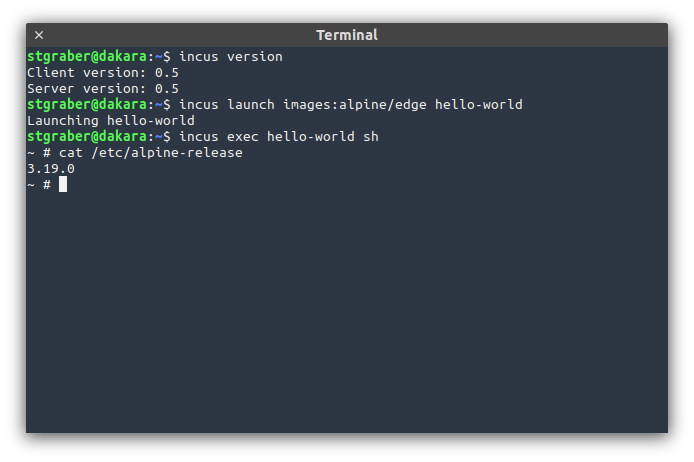

You can take the latest release of Incus up for a spin through our online demo service at: https://linuxcontainers.org/incus/try-it/

And as always, my company is offering commercial support on Incus, ranging from by-the-hour support contracts to one-off services on things like initial migration from LXD, review of your deployment to squeeze the most out of Incus or even feature sponsorship. You’ll find all details of that here: https://zabbly.com/incus

Donations towards my work on this and other open source projects are also always appreciated; you can find me on GitHub Sponsors, Patreon and Ko-fi.

Enjoy!
