The Incus team is pleased to announce the release of Incus 6.8!

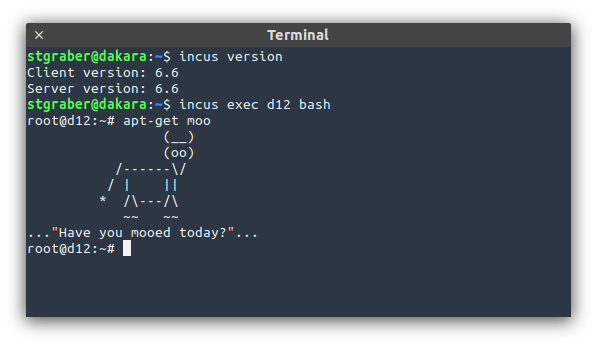

This is the last release of 2024, but it still packs a punch with a bunch of VM-related improvements, including the ability to move a running VM between storage pools, as well as a new authorization backend, improvements to volume handling for application containers and more.

The highlights for this release are:

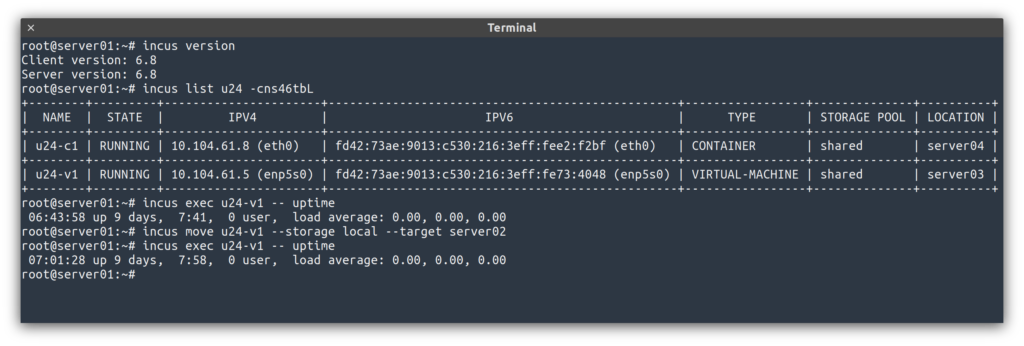

- Storage live migration for VMs

- Authorization scriptlet

- Console screenshots for VMs

- Initial owner and mode for custom storage volumes

- Small updates to the OpenFGA model

- Image alias reuse on import

- New incus-simplestreams prune command

- Console access locking

The full announcement and changelog can be found here.

And for those who prefer videos, here’s the release overview video:

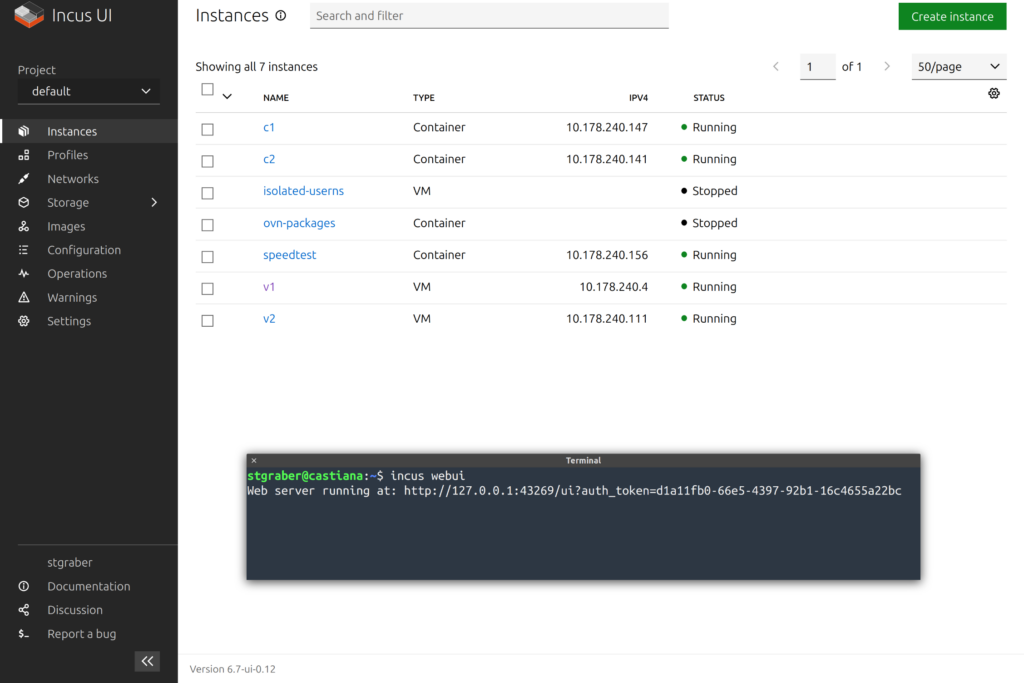

You can take the latest release of Incus up for a spin through our online demo service at: https://linuxcontainers.org/incus/try-it/

Some of the Incus maintainers will be present at FOSDEM 2025, helping run both the containers and kernel devrooms. For those arriving in town early, there will be a “Friends of Incus” gathering sponsored by FuturFusion on Thursday evening (January 30th). You can find the details of that here.

And as always, my company is offering commercial support on Incus, ranging from by-the-hour support contracts to one-off services on things like initial migration from LXD, review of your deployment to squeeze the most out of Incus or even feature sponsorship. You’ll find all details of that here: https://zabbly.com/incus

Donations towards my work on this and other open source projects are also always appreciated. You can find me on GitHub Sponsors, Patreon and Ko-fi.

Enjoy!
