The Incus team is pleased to announce the release of Incus 6.13!

This is a VERY busy release with a lot of new features of all sizes and for all kinds of different users, so there should be something for everyone!

The highlights for this release are:

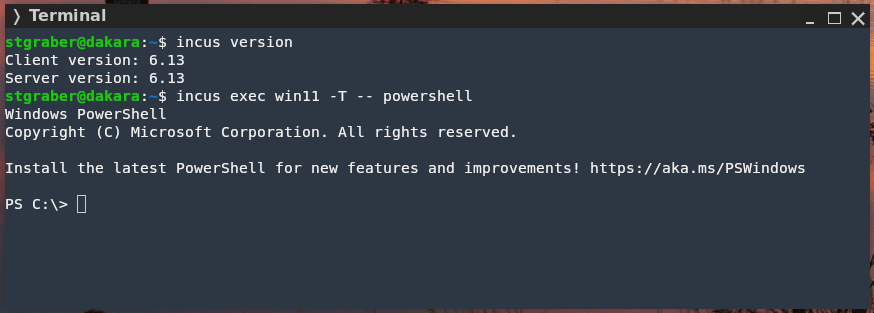

- Windows agent support

- Improvements to incus-migrate

- SFTP on custom volumes

- Configurable instance external IP address on OVN networks

- Ability to pin gateway MAC address on OVN networks

- Clock handling in virtual machines

- New get-client-certificate and get-client-token commands

- DHCPv6 support for OCI

- Network host tables configuration for routed NICs

- Support for split image publishing

- Preseed of certificates

- Configuration of list format in the CLI

- New CLI aliases for create/add and delete/remove/rm

- OS metrics are now included in Incus metrics when running on Incus OS

- Converted more database logic to generated code

- Converted more CLI list functions to use server-side filtering

- Converted more documentation to be generated from the code

The full announcement and changelog can be found here.

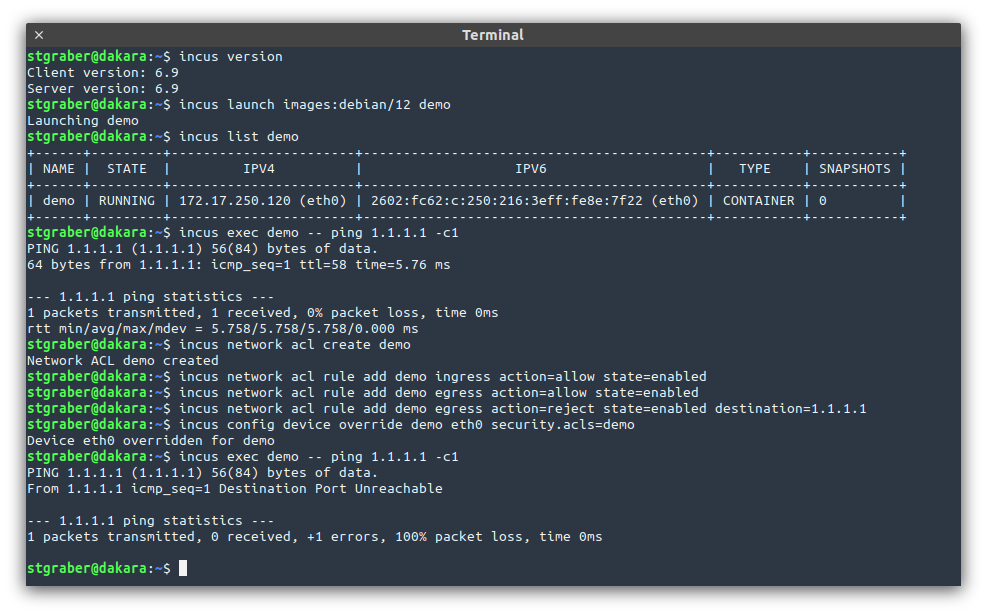

And for those who prefer videos, here’s the release overview video:

You can take the latest release of Incus up for a spin through our online demo service at: https://linuxcontainers.org/incus/try-it/

And as always, my company is offering commercial support on Incus, ranging from by-the-hour support contracts to one-off services on things like initial migration from LXD, review of your deployment to squeeze the most out of Incus or even feature sponsorship. You’ll find all details of that here: https://zabbly.com/incus

Donations towards my work on this and other open source projects are also always appreciated. You can find me on GitHub Sponsors, Patreon and Ko-fi.

Enjoy!
