The Incus team is pleased to announce the release of Incus 6.21!

We’re starting 2026 with a couple of security fixes, but that’s not all. We’re also introducing some long-requested CLI improvements, making SR-IOV easier to use with network cards, improving startup performance and more!

This release includes two security fixes:

- CVE-2026-23953 (Newline injection in environment variable)

- CVE-2026-23954 (Arbitrary file read/write through templates)

On the feature front, the highlights for this release are:

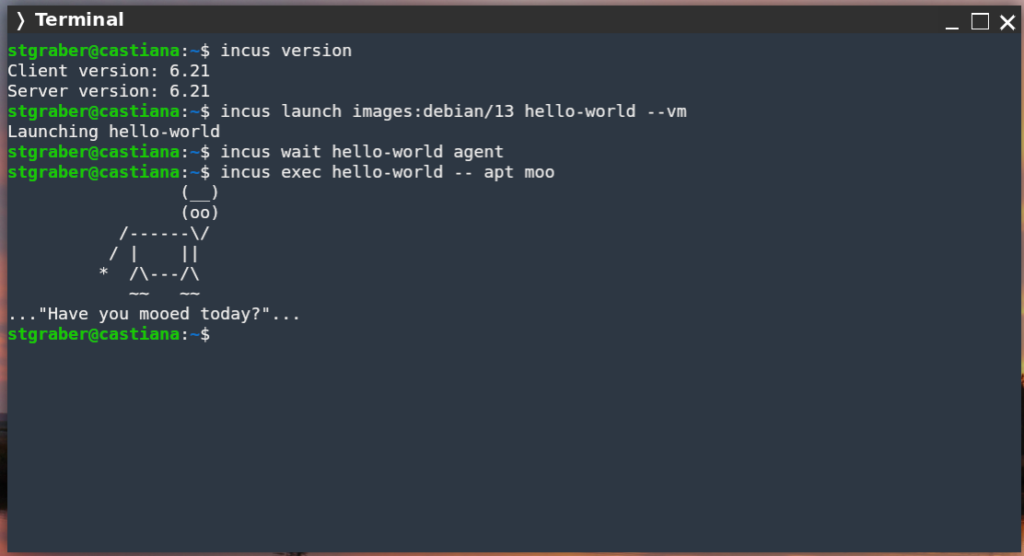

- New “incus wait” command

- Automatic SR-IOV network VF selection

- Support for detaching and disconnecting network interfaces

- Parallel instance startup

- Source subnet restrictions through OIDC claims

- Better DNS SOA handling in network zones

- Forceful (recursive) directory deletion in file REST API

The full announcement and changelog can be found here.

And for those who prefer videos, here’s the release overview video:

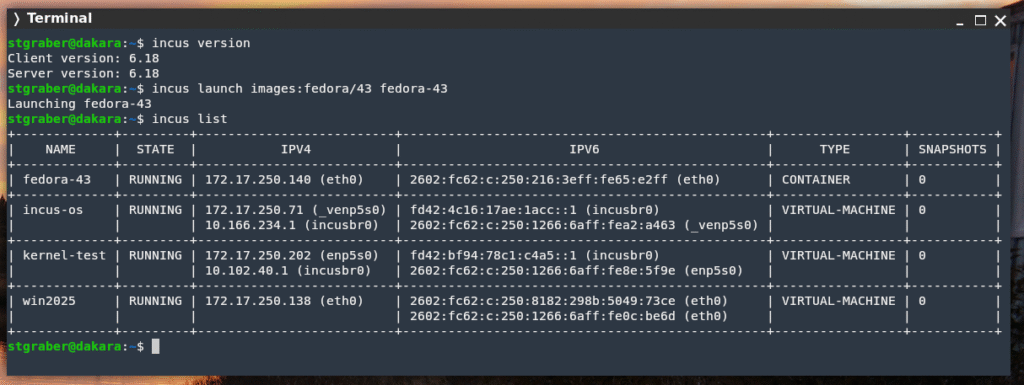

You can take the latest release of Incus for a spin through our online demo service at: https://linuxcontainers.org/incus/try-it/

And as always, my company is offering commercial support on Incus, ranging from by-the-hour support contracts to one-off services on things like initial migration from LXD, review of your deployment to squeeze the most out of Incus or even feature sponsorship. You’ll find all details of that here: https://zabbly.com/incus

Donations towards my work on this and other open source projects are also always appreciated. You can find me on GitHub Sponsors, Patreon and Ko-fi.

Enjoy!
